For years, the internal workings of large language models have been treated as something close to unknowable — a black box that produces outputs without offering much explanation of how it got there. That assumption is now being challenged in earnest, and Alibaba's Qwen team is among the most aggressive forces pushing back against it. Their newly released Qwen-Scope, an open-source suite of Sparse Autoencoders, represents one of the more serious attempts yet to make the interior logic of LLMs legible to the people who build with them.

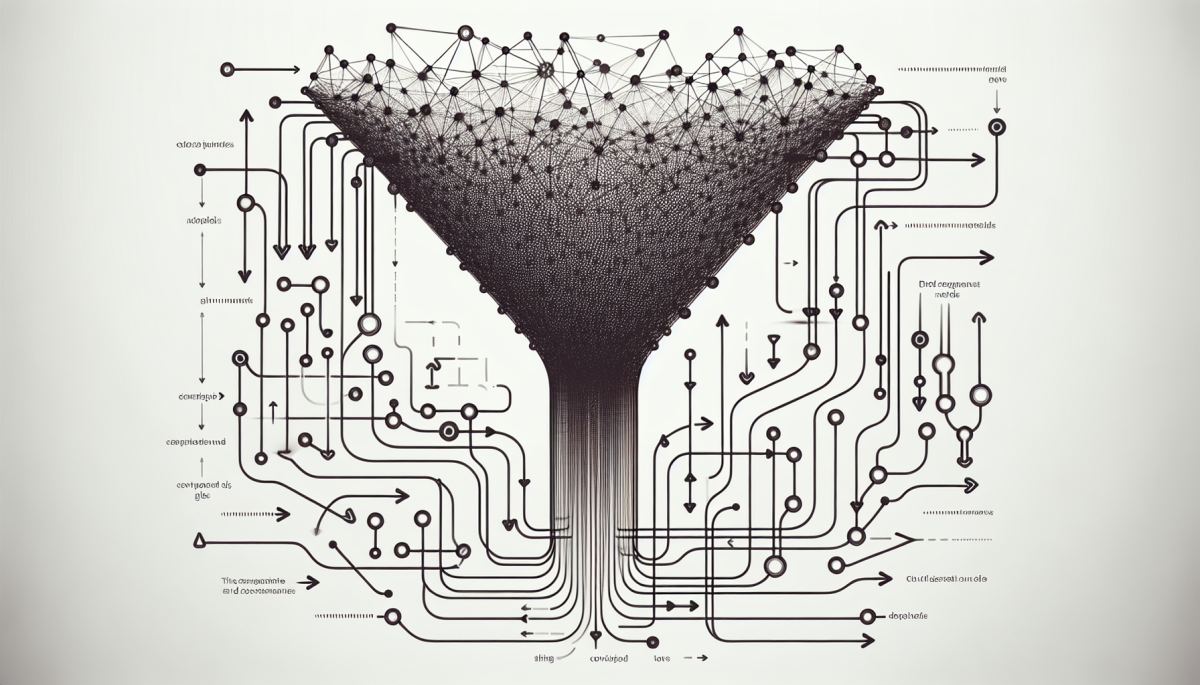

Sparse Autoencoders, or SAEs, are a class of interpretability tools that decompose the dense, high-dimensional activations inside a neural network into a much larger set of sparse features, each intended to be more readily interpretable by humans. The core idea is that when a model processes language, its internal representations are held in superposition: many concepts are encoded simultaneously in the same vector space, making them nearly impossible to disentangle by inspection alone. SAEs pull those representations apart, isolating individual features so researchers and developers can see what a model is "thinking about" at a given layer. Qwen-Scope applies this approach directly to the Qwen family of models and, crucially, releases the tooling as open-source infrastructure rather than keeping it as internal research.
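To make the decomposition concrete, here is a minimal sketch of a sparse autoencoder in plain Python. It is not Qwen-Scope's implementation or API: the class name `TinySAE`, the dimensions, the random (untrained) weights, and the `l1_coeff` value are all assumptions chosen for illustration. It follows one common formulation, in which a ReLU encoder maps an activation vector to a wider, non-negative feature vector and a linear decoder reconstructs the original activation, with an L1 penalty encouraging most features to stay at zero.

```python
import random


def matvec(matrix, vec):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * v for w, v in zip(row, vec)) for row in matrix]


class TinySAE:
    """Toy sparse autoencoder (hypothetical, untrained; for illustration only)."""

    def __init__(self, d_model, n_features, seed=0):
        rng = random.Random(seed)
        # Encoder has shape n_features x d_model; decoder has shape d_model x n_features.
        # n_features is normally much larger than d_model.
        self.W_enc = [[rng.gauss(0, 0.1) for _ in range(d_model)]
                      for _ in range(n_features)]
        self.b_enc = [0.0] * n_features
        self.W_dec = [[rng.gauss(0, 0.1) for _ in range(n_features)]
                      for _ in range(d_model)]
        self.b_dec = [0.0] * d_model

    def encode(self, x):
        """Map a dense activation to non-negative feature activations."""
        centered = [xi - bi for xi, bi in zip(x, self.b_dec)]
        pre = matvec(self.W_enc, centered)
        # ReLU zeroes out negative pre-activations, which is where sparsity comes from.
        return [max(0.0, p + b) for p, b in zip(pre, self.b_enc)]

    def decode(self, features):
        """Reconstruct the dense activation from feature activations."""
        recon = matvec(self.W_dec, features)
        return [r + b for r, b in zip(recon, self.b_dec)]

    def loss(self, x, l1_coeff=1e-3):
        """Squared reconstruction error plus an L1 sparsity penalty."""
        features = self.encode(x)
        x_hat = self.decode(features)
        reconstruction_error = sum((a - b) ** 2 for a, b in zip(x, x_hat))
        # Features are non-negative after ReLU, so their sum is the L1 norm.
        sparsity_penalty = sum(features)
        return reconstruction_error + l1_coeff * sparsity_penalty


sae = TinySAE(d_model=4, n_features=16)
activation = [1.0, -0.5, 0.3, 0.2]  # stand-in for one residual-stream vector
features = sae.encode(activation)
reconstruction = sae.decode(features)
```

In a trained SAE, minimizing this loss over many activations is what pushes each of the 16 (in practice, thousands or millions of) features toward representing a single recognizable concept; with the random weights above, the features carry no meaning.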

The timing of this release is not accidental. The field of mechanistic interpretability, the branch of AI research dedicated to understanding how neural networks actually compute, has been gaining momentum rapidly, driven in part by safety concerns and in part by the practical frustrations of developers who cannot reliably predict or control model behavior. Anthropic's work on SAEs for Claude 3 Sonnet (Templeton et al., 2024) demonstrated that these tools could identify millions of distinct features inside a frontier model, including some that were genuinely surprising to the researchers themselves. That work generated enormous interest, but it remained largely inaccessible to anyone outside Anthropic's own infrastructure.

Qwen-Scope changes the calculus by making comparable tooling available for a widely used open-weight model family. This matters because interpretability research has historically been bottlenecked by access — you can only study the models you can run, and frontier closed models are off-limits to most researchers. By releasing SAEs trained on Qwen models alongside the tooling to use them, the Qwen team is effectively democratizing a class of research that has been concentrated in a handful of well-funded labs. The downstream effect could be a significant acceleration in the pace at which the broader community understands what these systems are actually doing.

There is also a practical development angle that should not be underestimated. When a model behaves unexpectedly (producing biased outputs, hallucinating facts, or failing on edge cases), developers currently have very limited tools for diagnosing why. SAE-based interpretability offers a path toward something closer to genuine debugging: the ability to identify which internal features are activating during a failure case and, potentially, to intervene on those features directly. That kind of intervention is sometimes called "activation steering," and it is one of the more promising techniques for fine-grained behavioral control without full retraining.
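The two steps described above, finding the features that fire during a failure and then intervening on one of them, can be sketched in a few lines of Python. This is an illustrative outline under stated assumptions, not Qwen-Scope's interface: the helper names `top_features` and `steer` are hypothetical, and the sketch assumes that each SAE feature has a direction vector (for example, its decoder column) in the same space as the model's activations.

```python
def top_features(feature_activations, k=3):
    """Return the indices of the k most active features, highest first."""
    ranked = sorted(
        range(len(feature_activations)),
        key=lambda i: feature_activations[i],
        reverse=True,
    )
    return ranked[:k]


def steer(activation, feature_direction, strength):
    """Shift an activation along one feature direction.

    A positive strength amplifies the feature; a negative strength suppresses it.
    """
    if len(activation) != len(feature_direction):
        raise ValueError("activation and feature direction must have the same length")
    return [a + strength * d for a, d in zip(activation, feature_direction)]


# Hypothetical feature activations recorded during a failing prompt.
suspects = top_features([0.0, 3.0, 1.5, 0.2], k=2)

# Hypothetical 2-dimensional activation and feature direction.
steered = steer([1.0, 2.0], [0.5, -1.0], strength=2.0)
```

In a real workflow the steered vector would be written back into the model's forward pass at the layer the SAE was trained on, and the choice of `strength` is typically found by experiment rather than derived.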

The implications of widely available interpretability tooling extend well beyond cleaner debugging workflows. If developers can reliably identify the internal features that drive specific model behaviors, the nature of AI auditing changes fundamentally. Regulators in the European Union, operating under the AI Act's transparency requirements, have struggled with the question of how to audit systems whose decision-making processes are opaque even to their creators. Tools like Qwen-Scope, if they mature and generalize, could provide the technical substrate for a new kind of compliance infrastructure — one grounded in mechanistic evidence rather than behavioral testing alone.

There is a feedback loop worth watching here. As interpretability tools become more accessible, more researchers will use them, which will generate more findings about how models encode knowledge, bias, and reasoning. Those findings will feed back into training decisions, potentially producing models that are easier to interpret by design — a virtuous cycle that the field has been trying to bootstrap for years. Whether Qwen-Scope becomes a meaningful node in that cycle depends on adoption, documentation quality, and whether the SAEs generalize usefully across model sizes and tasks.

What is already clear is that the black-box era of LLM development is under genuine pressure. The question is no longer whether interpretability tools will become standard infrastructure, but which organizations will shape what that infrastructure looks like — and who gets to use it.

References

- Templeton et al. (2024) — Scaling Monosemanticity: Extracting Interpretable Features from Claude 3 Sonnet

- Bricken et al. (2023) — Towards Monosemanticity: Decomposing Language Models With Dictionary Learning

- Elhage et al. (2022) — Toy Models of Superposition

- European Parliament (2024) — EU Artificial Intelligence Act
