The assumption baked into nearly every major language model release over the past three years is simple: more parameters, more data, more compute, better results. Scaling has held remarkably well since DeepMind's Chinchilla paper rewrote the playbook in 2022 by showing that training data should grow in step with parameter count. But a new architecture from researchers at UC San Diego and Together AI is quietly challenging that orthodoxy, and the implications stretch well beyond a single research paper.

The system is called Parcae, and its central claim is striking: a looped language model built on this architecture can match the output quality of a standard transformer that is roughly twice its size. That is not a marginal efficiency gain. If it holds up at scale, it would represent a fundamental rethinking of where intelligence lives inside a neural network.

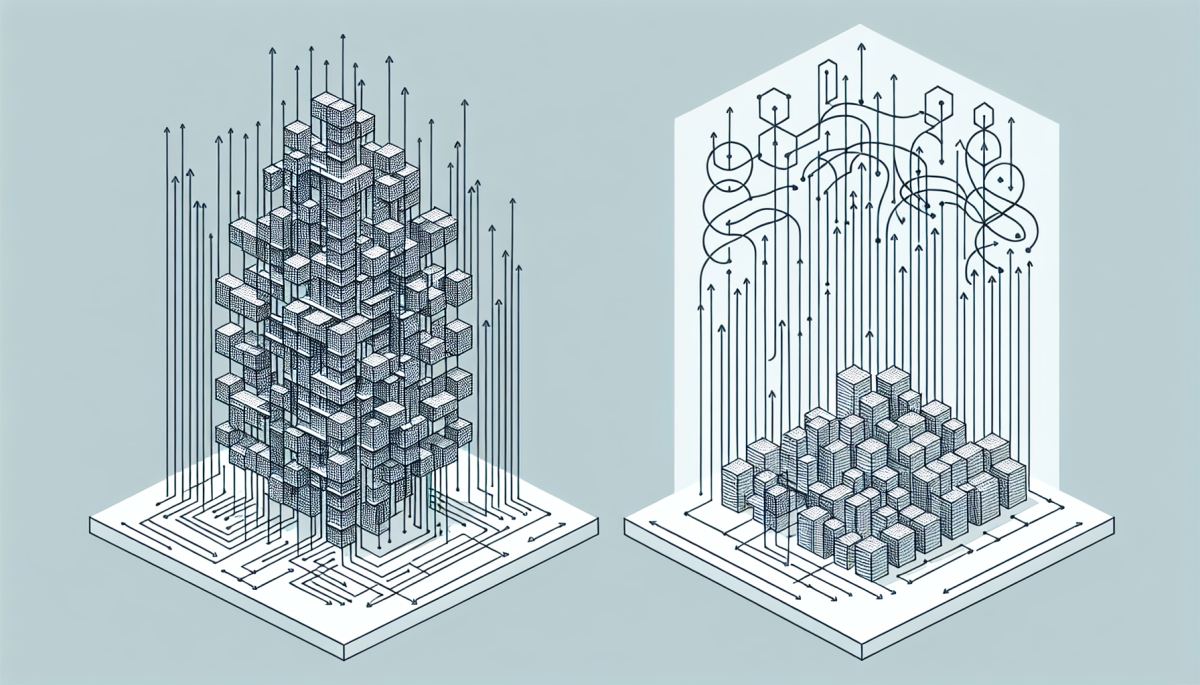

Looped or recurrent-style architectures are not new. The appeal has always been obvious: instead of stacking dozens of unique transformer layers, you reuse a smaller set of layers multiple times, dramatically cutting the parameter count. The problem is that looping tends to make training unstable. Gradients either explode or vanish as they travel through repeated iterations of the same weights, and the model either fails to converge or converges to something mediocre. That instability is a large part of why the field moved away from recurrence in favor of the brute-force elegance of deep transformers.
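A toy illustration of that instability (not Parcae's code): if a looped block applied the same scalar weight `w` on every pass, the chain rule would multiply the gradient by `w` once per loop. The function name `loop_gradient` and the choice of 50 loops are hypothetical, picked only to make the compounding visible.

```python
def loop_gradient(w: float, loops: int) -> float:
    """Gradient of h_n = w**loops * h_0 with respect to h_0.

    Computed one loop at a time to mirror backpropagation through
    repeated applications of the same (scalar) weight.
    """
    grad = 1.0
    for _ in range(loops):
        grad *= w  # each pass through the shared weight scales the gradient by w
    return grad


for w in (0.9, 1.0, 1.1):
    print(f"w={w}: gradient after 50 loops = {loop_gradient(w, 50):.4g}")
```

With `w = 0.9` the gradient shrinks to roughly 0.005 after 50 loops, and with `w = 1.1` it grows past 100; only a weight of exactly 1 leaves it unchanged. In a real network the scalar becomes a per-loop Jacobian matrix, but the same multiplicative compounding is what training has to keep in check.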

What Parcae appears to have solved, at least in the reported experiments, is that stability problem. The architecture introduces design choices that keep gradient flow well-behaved across loops, allowing the model to actually learn from its repeated passes rather than fighting against them. The result is a model that achieves competitive benchmark performance while carrying significantly fewer parameters into deployment.

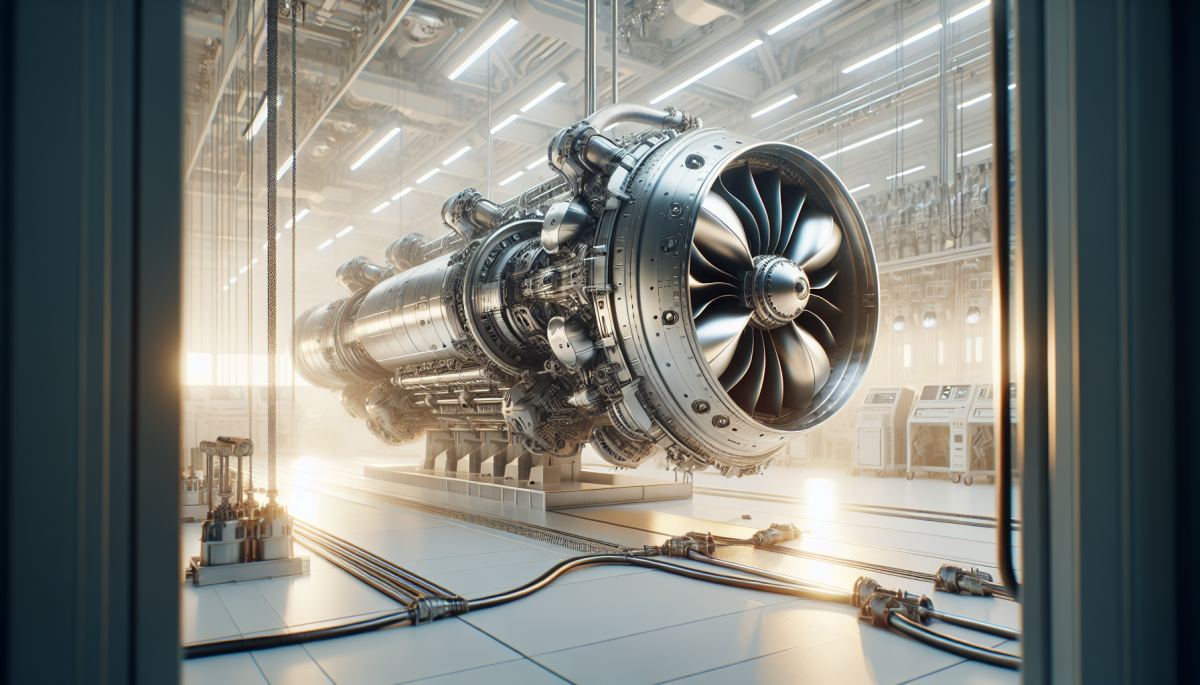

This matters enormously for inference costs. Training a model is expensive, but you do it once. Inference is the cost you pay every single time someone sends a query, and at the scale of modern AI deployments, those costs compound relentlessly. A model with half the parameters that performs equivalently needs half the memory to hold its weights, which makes it cheaper to store and serve and far more viable for edge deployments where memory and power are hard constraints. Speed is less clear-cut: because a looped model executes its shared layers several times per token, its compute per token need not shrink in proportion to its parameter count.
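Rough arithmetic makes the memory argument concrete. The sketch below compares a hypothetical 24-layer standard model with a hypothetical looped model that stores 12 layers and runs them twice. The class `ModelShape`, the 50M parameters per layer, the 2-bytes-per-parameter (fp16/bf16) storage, and the roughly-2-FLOPs-per-parameter rule of thumb are all assumptions for illustration, not figures from the Parcae paper.

```python
from dataclasses import dataclass


@dataclass
class ModelShape:
    name: str
    params_per_layer: float  # parameters in one transformer block (hypothetical)
    unique_layers: int       # distinct blocks whose weights must be stored
    loops: int               # how many times the stored stack is executed per token

    def weight_bytes(self, bytes_per_param: int = 2) -> float:
        # Only unique weights are stored; 2 bytes/param assumes fp16 or bf16.
        return self.params_per_layer * self.unique_layers * bytes_per_param

    def flops_per_token(self) -> float:
        # Rule of thumb: ~2 FLOPs per parameter per executed layer in a forward
        # pass, ignoring attention over the context and the embeddings.
        return 2 * self.params_per_layer * self.unique_layers * self.loops


standard = ModelShape("standard", params_per_layer=50e6, unique_layers=24, loops=1)
looped = ModelShape("looped", params_per_layer=50e6, unique_layers=12, loops=2)

for m in (standard, looped):
    print(f"{m.name}: {m.weight_bytes() / 1e9:.1f} GB of weights, "
          f"{m.flops_per_token() / 1e9:.1f} GFLOP per token")
```

Under these assumptions the looped model stores half as many weight bytes (1.2 GB versus 2.4 GB) while executing the same 2.4 GFLOP per token, which is why memory footprint, rather than raw compute, is where reusing layers pays off most directly.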

It would be easy to read Parcae as a purely academic contribution, but the institutional context tells a different story. Together AI is a company explicitly built around the idea that open and efficient inference infrastructure matters. Their involvement signals that this research is pointed directly at a real commercial and infrastructural problem: the AI industry is burning through data center capacity at a rate that is starting to alarm even its most enthusiastic investors.

The International Energy Agency projected earlier this year that data centers could account for up to 4 percent of global electricity demand by 2026, with AI workloads as a primary driver. Against that backdrop, any architecture that delivers equivalent model quality at half the parameter count is not just academically interesting — it is potentially a pressure-release valve on a system that is tightening fast.

There is also a subtler dynamic at play. As the largest frontier labs push toward trillion-parameter models, the gap between what they can deploy and what smaller organizations, governments, or researchers can run keeps widening. Efficient architectures like Parcae could partially counteract that concentration of capability, making high-quality language models accessible to institutions that cannot afford to operate at hyperscaler scale. That is a meaningful redistribution of who gets to build with these tools.

The second-order effect worth watching is what happens to the benchmarking culture that has grown up around transformer scaling. If a looped model at half the size can match a standard transformer, then parameter count — long used as a rough proxy for model quality and capability — becomes an even less reliable signal than it already is. Leaderboards and model cards that lead with parameter counts would need rethinking, and the implicit prestige economy around "largest model" announcements would lose some of its footing.

None of this is guaranteed. Research results at the scale reported in a single paper do not always survive contact with the full complexity of production deployment. But the direction of travel is clear: the field is running out of easy wins from simply making models bigger, and the researchers willing to revisit foundational architectural assumptions are the ones most likely to find the next real efficiency breakthrough.

If Parcae's stability gains prove durable as loop counts and model sizes increase, the question will shift quickly from whether looped architectures can work to whether the industry's existing infrastructure investments are optimized for the wrong kind of model entirely.
