The infrastructure powering large language models has a geography problem. Ever since transformer-based models became the backbone of commercial AI, the computational work of running them has been tethered to a single location. Prefill, the phase where a model processes your input prompt, and decode, where it generates a response token by token, have both lived inside the same datacenter, often on the same cluster of machines connected by ultra-fast RDMA networking. That constraint was never a design choice so much as a practical ceiling. Now, researchers at Moonshot AI and Tsinghua University are proposing a way through it.

Their system, called PrfaaS, short for Prefill-as-a-Service, reimagines how LLM inference can be split across datacenters rather than confined within one. The core insight is architectural: prefill and decode are fundamentally different kinds of work. Prefill is compute-intensive and bursty, processing potentially thousands of tokens in a single forward pass. Decode is memory-bandwidth-intensive and sequential, producing one token at a time. Treating them as inseparable has forced operators to over-provision expensive GPU clusters that must handle both workloads simultaneously, even when demand for each fluctuates independently.
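A rough back-of-envelope calculation shows why the two phases stress hardware differently. The Python sketch below counts floating-point operations per byte of memory traffic for a single square weight projection. It assumes 2-byte (fp16/bf16) values, a hypothetical hidden size of 4096, and that the weights and activations each cross memory once. It ignores attention, caches, and batching, so treat it as an illustration rather than a performance model.

```python
def matmul_arithmetic_intensity(num_tokens: int, d_model: int, bytes_per_value: int = 2) -> float:
    """FLOPs per byte for one (num_tokens x d_model) @ (d_model x d_model) projection.

    Counts 2 FLOPs per multiply-add and assumes the weight matrix, the input
    activations, and the output activations each cross memory exactly once.
    """
    flops = 2 * num_tokens * d_model * d_model
    weight_bytes = d_model * d_model * bytes_per_value
    activation_bytes = 2 * num_tokens * d_model * bytes_per_value  # read input + write output
    return flops / (weight_bytes + activation_bytes)


if __name__ == "__main__":
    d = 4096  # hypothetical hidden size
    prefill = matmul_arithmetic_intensity(num_tokens=4096, d_model=d)  # whole prompt at once
    decode = matmul_arithmetic_intensity(num_tokens=1, d_model=d)      # one new token
    print(f"prefill (4096-token prompt): {prefill:.1f} FLOPs/byte")
    print(f"decode  (1 new token):       {decode:.2f} FLOPs/byte")
```

Under these assumptions a 4,096-token prompt performs about 1,365 FLOPs per byte moved, while a single decode token performs about one, so prefill tends to be limited by compute and decode by memory bandwidth. Serving systems batch many decode requests together to raise that figure, but the gap is why the two phases respond so differently to the same hardware.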

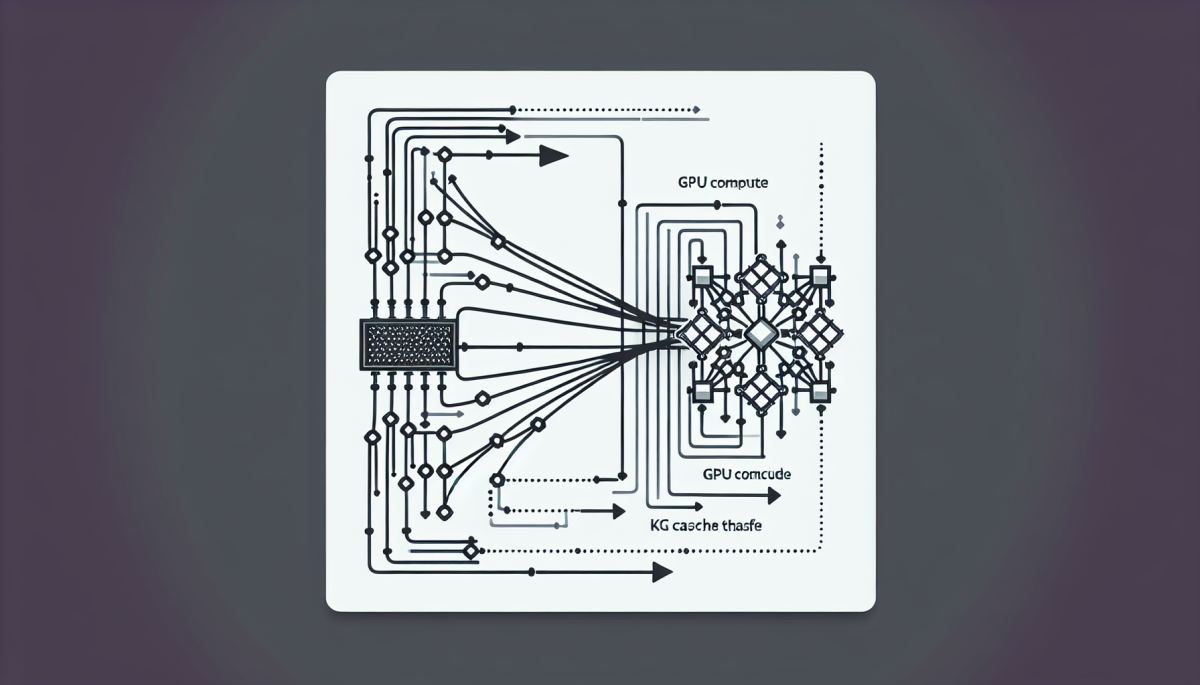

The bottleneck that has kept these two phases locked together is the KV cache, the intermediate memory state a model generates during prefill that decode then reads from continuously. Transferring that cache across datacenters over standard internet links has historically been too slow to be practical. PrfaaS addresses this directly by designing a cross-datacenter KVCache architecture that makes remote prefill viable, allowing the prefill computation to happen somewhere geographically separate from where the decode tokens are being streamed back to the user.
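To make that dependency concrete, here is a toy single-layer, single-head attention sketch in Python. The embedding and projection functions are hypothetical stand-ins for a real model's learned weights, and this is not PrfaaS code. It only illustrates the general mechanism: prefill fills a cache of keys and values once, and every decode step appends to that cache and re-reads all of it.

```python
import math
from dataclasses import dataclass, field

DIM = 4  # toy head dimension


def embed(token_id: int) -> list[float]:
    # Hypothetical embedding; a real model uses learned weights and many layers.
    return [math.sin(token_id * (i + 1)) for i in range(DIM)]


# Toy stand-ins for the learned query/key/value projection matrices.
def to_q(x: list[float]) -> list[float]:
    return [0.5 * v for v in x]


def to_k(x: list[float]) -> list[float]:
    return [1.5 * v for v in x]


def to_v(x: list[float]) -> list[float]:
    return x[::-1]


def attend(q, keys, values):
    # Scaled dot-product attention of one query over every cached position.
    scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(DIM) for k in keys]
    m = max(scores)
    weights = [math.exp(s - m) for s in scores]  # subtract max for numerical stability
    total = sum(weights)
    return [sum(w * v[i] for w, v in zip(weights, values)) / total for i in range(DIM)]


@dataclass
class KVCache:
    keys: list = field(default_factory=list)
    values: list = field(default_factory=list)


def prefill(prompt: list[int]) -> KVCache:
    # Build keys and values for the whole prompt once; this is the state decode needs.
    cache = KVCache()
    for t in prompt:
        x = embed(t)
        cache.keys.append(to_k(x))
        cache.values.append(to_v(x))
    return cache


def decode_step(cache: KVCache, token_id: int) -> list[float]:
    # Append the new token's key/value, then read the entire cache to attend.
    x = embed(token_id)
    cache.keys.append(to_k(x))
    cache.values.append(to_v(x))
    return attend(to_q(x), cache.keys, cache.values)


def no_cache_step(history: list[int], token_id: int) -> list[float]:
    # Reference path: recompute keys/values for the full history from scratch.
    xs = [embed(t) for t in history + [token_id]]
    return attend(to_q(embed(token_id)), [to_k(x) for x in xs], [to_v(x) for x in xs])


if __name__ == "__main__":
    prompt = [3, 14, 15, 92]
    cache = prefill(prompt)       # would run at the prefill site
    out = decode_step(cache, 65)  # would run wherever the cache now lives
    print(out == no_cache_step(prompt, 65))
```

The cached and uncached paths give the same result, but the cached path computes projections only for the new token. The trade-off is that the cache grows with every token and must be resident wherever decode runs.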

The implications here are not purely technical. The AI inference market is under enormous cost pressure. Companies like Moonshot AI, which operates the Kimi conversational AI platform in China, are competing in an environment where inference efficiency is increasingly the margin between profit and loss. OpenAI, Anthropic, Google, and a growing field of open-source model operators are all racing to reduce the per-token cost of serving responses at scale. Disaggregating prefill from decode, if PrfaaS delivers on its promise, could allow operators to run prefill jobs on cheaper, more available GPU capacity in one region while keeping decode latency low by placing that phase closer to end users.

This is essentially applying the logic of cloud microservices to AI inference. Just as web applications long ago separated stateless compute from stateful storage and distributed them across availability zones, PrfaaS proposes treating the two phases of LLM inference as independently schedulable services. The KVCache becomes the handoff artifact, a data structure that travels between facilities rather than staying pinned to local memory.
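For the cache to act as a handoff artifact, it has to be packaged so it can be shipped. The Python sketch below shows one hypothetical way to do this. It serializes a single request's cache for a single attention head into a byte buffer at the prefill site and rebuilds it at the decode site. The `KVC1` tag, the header layout, and the half-precision encoding are illustrative assumptions. The source does not describe PrfaaS's actual transfer format, and a real system would also carry per-layer and per-head structure.

```python
import struct
from dataclasses import dataclass

MAGIC = b"KVC1"  # hypothetical format tag, not a real PrfaaS wire format
HEADER = struct.Struct("<4sQII")  # magic, request_id, num_positions, head_dim (little-endian)


@dataclass
class KVBlock:
    request_id: int
    keys: list[list[float]]    # [num_positions][head_dim]
    values: list[list[float]]  # [num_positions][head_dim]


def serialize(block: KVBlock) -> bytes:
    n = len(block.keys)
    dim = len(block.keys[0]) if n else 0
    header = HEADER.pack(MAGIC, block.request_id, n, dim)
    flat = [x for row in block.keys for x in row] + [x for row in block.values for x in row]
    # 'e' is IEEE 754 half precision, standing in for an fp16 cache.
    payload = struct.pack(f"<{len(flat)}e", *flat)
    return header + payload


def deserialize(buf: bytes) -> KVBlock:
    magic, request_id, n, dim = HEADER.unpack_from(buf, 0)
    if magic != MAGIC:
        raise ValueError("not a KV block")
    flat = struct.unpack_from(f"<{2 * n * dim}e", buf, HEADER.size)
    rows = [list(flat[i * dim:(i + 1) * dim]) for i in range(2 * n)]
    return KVBlock(request_id, rows[:n], rows[n:])  # first n rows are keys, rest values


if __name__ == "__main__":
    block = KVBlock(request_id=7, keys=[[0.5, -1.25]], values=[[2.0, 0.0]])
    wire = serialize(block)                       # produced at the prefill site
    print(len(wire), deserialize(wire) == block)  # consumed at the decode site
```

Because the buffer is self-describing, the decode side needs only the bytes, not access to the prefill machine's memory. That property is what allows the two phases to be scheduled independently.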

The challenge, and it is a real one, is that KV caches are large. For a model handling a long context window, the cache can run into gigabytes. Sending that across even a fast inter-datacenter link takes time. Because decode cannot start until the cache arrives, the transfer adds directly to the delay before the user sees the first token of a response. The Moonshot and Tsinghua team's contribution lies in making that transfer efficient enough to be practical, though the full technical details of how they achieve this are still emerging from the research.
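The scale of the problem can be estimated with simple arithmetic. The sketch below computes the cache size as keys plus values across every layer, KV head, head dimension, and token. It then computes an idealized transfer time at full line rate, with no protocol overhead, compression, or overlap with computation. The model configuration is hypothetical, loosely resembling an 8B-class model that uses grouped-query attention, and does not describe Moonshot's models.

```python
def kv_cache_bytes(num_layers: int, num_kv_heads: int, head_dim: int,
                   num_tokens: int, bytes_per_value: int = 2) -> int:
    # Factor of 2 covers both keys and values at every layer.
    return 2 * num_layers * num_kv_heads * head_dim * num_tokens * bytes_per_value


def transfer_seconds(num_bytes: int, link_gbps: float) -> float:
    # Idealized: sustained line rate, no protocol overhead, compression, or overlap.
    return num_bytes * 8 / (link_gbps * 1e9)


if __name__ == "__main__":
    # Hypothetical config: 32 layers, 8 KV heads, head_dim 128, fp16, 128K-token context.
    size = kv_cache_bytes(num_layers=32, num_kv_heads=8, head_dim=128, num_tokens=128 * 1024)
    print(f"KV cache: {size / 2**30:.1f} GiB")
    for gbps in (10, 100, 400):
        print(f"{gbps:>4} Gbit/s link: {transfer_seconds(size, gbps):.2f} s")
```

Under these assumptions the cache comes to 16 GiB, which takes roughly 1.4 seconds to move even at a sustained 100 Gbit/s and about ten times longer at 10 Gbit/s. Shorter prompts scale down linearly, but the example shows why the transfer has to be made efficient and not merely possible.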

If cross-datacenter prefill becomes a standard pattern, the downstream effects on AI infrastructure could be significant. Hyperscalers and colocation providers that have been building dense, RDMA-connected GPU clusters as the assumed unit of LLM serving may find that assumption loosening. A world where prefill can be farmed out to wherever spare GPU capacity exists, including smaller regional datacenters or even spot-instance clouds, changes the calculus for how AI compute gets procured and priced.
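One way to picture that calculus is a placement rule that weighs price against latency. The following is a hypothetical rule, not anything the source attributes to PrfaaS. For each candidate prefill site, it estimates time-to-first-token as queue wait, plus prompt processing time, plus the time to ship the KV cache to the decode site. It then picks the cheapest site that fits a latency budget. Site names, throughputs, bandwidths, and prices are all invented for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PrefillSite:
    name: str
    queue_wait_s: float    # current wait before a prefill slot frees up
    tokens_per_s: float    # prefill throughput available for this request
    link_gbps: float       # bandwidth from this site to the decode site
    price_per_mtok: float  # hypothetical price per million prompt tokens


def estimated_ttft(site: PrefillSite, prompt_tokens: int, kv_bytes: int) -> float:
    # Wait for a slot, process the prompt, then ship the KV cache before decode starts.
    prefill_s = prompt_tokens / site.tokens_per_s
    transfer_s = kv_bytes * 8 / (site.link_gbps * 1e9)
    return site.queue_wait_s + prefill_s + transfer_s


def choose_site(sites: list[PrefillSite], prompt_tokens: int, kv_bytes: int,
                ttft_budget_s: float) -> Optional[PrefillSite]:
    # Cheapest site whose estimated time-to-first-token fits the budget, else None.
    feasible = [s for s in sites if estimated_ttft(s, prompt_tokens, kv_bytes) <= ttft_budget_s]
    return min(feasible, key=lambda s: s.price_per_mtok, default=None)


if __name__ == "__main__":
    sites = [
        PrefillSite("local-rdma", queue_wait_s=1.5, tokens_per_s=20_000, link_gbps=400, price_per_mtok=0.60),
        PrefillSite("regional-spare", queue_wait_s=0.1, tokens_per_s=15_000, link_gbps=100, price_per_mtok=0.25),
        PrefillSite("far-spot", queue_wait_s=0.0, tokens_per_s=15_000, link_gbps=10, price_per_mtok=0.10),
    ]
    pick = choose_site(sites, prompt_tokens=8_000, kv_bytes=2**30, ttft_budget_s=1.0)
    print(pick.name if pick else "no site meets the budget")
```

With a one-second budget in this made-up scenario, the busy local cluster loses on queueing and the cheapest remote site loses on transfer time, leaving the regional site as the best fit. Relax the budget and the cheapest capacity wins, which is the kind of trade-off that would feed into how prefill capacity is priced.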

There is also a geopolitical dimension worth watching. Moonshot AI operates in China, where access to the most advanced GPU hardware is constrained by U.S. export controls. Architectures that extract more efficiency from distributed, heterogeneous hardware, rather than requiring the densest possible single-site cluster, have an obvious strategic appeal in that context. PrfaaS may be solving a universal infrastructure problem, but the incentive to solve it is sharpest where hardware scarcity is most acute.

For the broader AI industry, the more interesting question is whether disaggregated inference opens a path toward a genuinely distributed AI serving layer, one where no single datacenter needs to hold the entire computational graph of a model run. That would represent a structural shift in how AI capacity is built and owned, and the researchers at Moonshot and Tsinghua may have just sketched one of the first credible blueprints for getting there.

