Andrej Karpathy has a habit of naming things before the rest of the field knows it needs a name. He coined "vibe coding" in February 2025, and now he's quietly describing a knowledge management approach that could reshape how developers think about AI memory. In a post on X, Karpathy outlined what he calls an "LLM Knowledge Base": a persistent, evolving library of markdown files that an AI actively maintains, updates, and consults across sessions. It's a deceptively simple idea with surprisingly deep implications.

The core problem Karpathy is solving is one that anyone who has spent serious time with large language models will recognize immediately. LLMs are stateless across conversations by default. Every new conversation begins from zero. You can paste in context, sure, but that workaround hits a wall fast: context windows are finite, and cramming them with background material is both inefficient and brittle. The dominant industry solution has been Retrieval-Augmented Generation, or RAG, which splits documents into chunks, embeds each chunk as a vector, and retrieves the most similar chunks at query time. RAG works, but it introduces its own complexity: embedding pipelines, vector databases, retrieval tuning, and a fundamental tension between what the retrieval system thinks is relevant and what the model actually needs.
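To make the moving parts of RAG concrete, here is a minimal sketch of the chunk, embed, and retrieve steps. It is an illustration under loud assumptions: `chunk`, `embed`, `cosine`, and `retrieve` are hypothetical names, and the bag-of-words `embed` is a toy stand-in for a learned embedding model, not how any production system computes vectors.

```python
import math
import re
from collections import Counter


def chunk(text: str, size: int = 40) -> list[str]:
    """Split text into consecutive chunks of at most `size` words."""
    words = text.split()
    return [" ".join(words[i:i + size]) for i in range(0, len(words), size)]


def embed(text: str) -> Counter:
    """Toy embedding (assumption): lowercase word counts instead of a learned vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(query: str, chunks: list[str], k: int = 2) -> list[str]:
    """Return the k chunks most similar to the query."""
    q = embed(query)
    ranked = sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)
    return ranked[:k]
```

Even in this toy form, the tension described above is visible: `retrieve` ranks by surface similarity to the query, which is not necessarily what the model needs in order to answer well.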

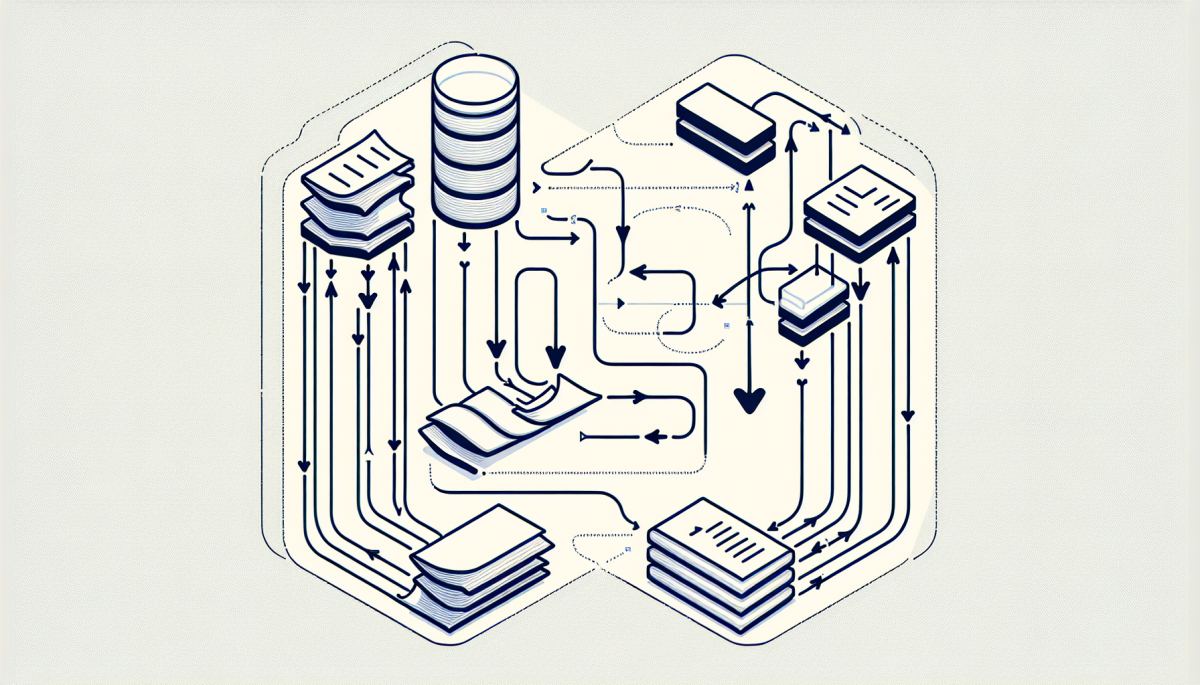

Karpathy's approach sidesteps that entire stack. Instead of indexing documents for retrieval, he maintains a set of markdown files — one per topic or project — that the LLM itself keeps updated. As work progresses, the AI rewrites and extends these files to reflect new findings, decisions, and open questions. The next session starts by loading the relevant file, giving the model a curated, human-readable, continuously refined briefing rather than a pile of raw retrieved chunks.
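The session loop described above might be sketched roughly as follows. This is not Karpathy's implementation: the one-file-per-topic layout, the function names, and the `rewrite` callable are all assumptions made for illustration, and `append_only_rewrite` is a placeholder standing in for a real model call that would reorganize the file rather than just append to it.

```python
import tempfile
from pathlib import Path
from typing import Callable

# Assumed layout: one markdown file per topic, e.g. kb/project-x.md


def load_briefing(kb_dir: Path, topic: str) -> str:
    """Return the current knowledge file for a topic, or '' if none exists yet."""
    path = kb_dir / f"{topic}.md"
    return path.read_text(encoding="utf-8") if path.exists() else ""


def end_session(
    kb_dir: Path,
    topic: str,
    session_notes: str,
    rewrite: Callable[[str, str], str],
) -> str:
    """Ask `rewrite` for an updated file given the old file and new notes, then save it."""
    current = load_briefing(kb_dir, topic)
    updated = rewrite(current, session_notes)
    kb_dir.mkdir(parents=True, exist_ok=True)
    (kb_dir / f"{topic}.md").write_text(updated, encoding="utf-8")
    return updated


def append_only_rewrite(current: str, notes: str) -> str:
    """Placeholder for an LLM call (assumption): appends a bullet instead of synthesizing."""
    header = current.rstrip() or "# Notes"
    return f"{header}\n\n- {notes.strip()}\n"


if __name__ == "__main__":
    kb = Path(tempfile.mkdtemp()) / "kb"
    end_session(kb, "project-x", "Chose SQLite over Postgres for local-first use.", append_only_rewrite)
    # The next session starts from the saved file rather than from zero.
    print(load_briefing(kb, "project-x"))
```

In a real setup, `rewrite` would send the current file and the session notes to a model and return its revised markdown; keeping it as a plain callable makes that part easy to swap.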

The elegance here is not just technical — it's cognitive. RAG systems are optimized for retrieval, but retrieval is not the same as understanding. A vector search can surface the paragraph where you mentioned a particular API, but it cannot surface the reasoning behind why you chose that API over three alternatives, or the half-formed concern you noted two weeks ago that turned out to matter. Markdown files maintained by an LLM can capture exactly that kind of structured, evolving reasoning. They're closer to a lab notebook than a search index.

There's also a compounding effect worth noting. Each time the AI updates the knowledge base, it is performing a kind of active synthesis — not just storing information but reorganizing it in light of what's new. Over time, the file becomes denser and more useful, not just longer. This is qualitatively different from a RAG corpus, which grows by accumulation rather than by refinement. The knowledge base approach essentially builds a feedback loop between the AI's outputs and its future inputs, which is a meaningful architectural shift.

Karpathy is not the first person to notice that plain text files can be powerful. The Zettelkasten method, the org-mode ecosystem in Emacs, and tools like Obsidian have all built communities around the idea that a well-maintained personal knowledge graph compounds in value over time. What Karpathy is doing is automating the maintenance layer — letting the LLM do the filing, the cross-referencing, and the summarizing that humans find tedious and therefore skip.

If this pattern spreads — and given Karpathy's influence in the developer community, it very likely will — the downstream effects on the AI tooling market could be significant. The RAG ecosystem has attracted substantial investment and engineering effort. Companies like Pinecone, Weaviate, and Chroma have built businesses around vector storage infrastructure. A shift toward LLM-maintained markdown libraries would not eliminate those businesses overnight, but it would erode one of their core use cases: personal and project-level knowledge management for individual developers and small teams.

More broadly, this approach raises a question about where AI memory should live. Cloud providers want memory to live in their managed services. Open-source advocates want it in local files you control. Karpathy's architecture is firmly in the latter camp — portable, auditable, version-controllable with Git, and entirely independent of any vendor's embedding format or retrieval API. That's not a coincidence coming from someone who has been publicly enthusiastic about local and open AI development.

The deeper systems consequence is about trust and legibility. When an AI maintains your knowledge base, you can read what it wrote. You can edit it, disagree with it, and correct it. That transparency is harder to achieve with a vector database, where the index that decides what gets retrieved is a set of floating-point vectors that no human can read directly, even when the source text is stored alongside them. As AI systems take on more autonomous roles in research and development workflows, the question of whether their memory is legible to the humans working alongside them may turn out to matter more than anyone currently expects.
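One way to picture that legibility: because the memory is plain text, a proposed rewrite can be reviewed as an ordinary diff before it is accepted. The sketch below uses Python's standard `difflib`; the `review_diff` helper and the sample file contents are hypothetical, chosen only to illustrate the idea.

```python
import difflib


def review_diff(before: str, after: str, name: str = "project.md") -> str:
    """Return a unified diff between the old and new versions of a knowledge file."""
    lines = difflib.unified_diff(
        before.splitlines(keepends=True),
        after.splitlines(keepends=True),
        fromfile=f"{name} (before)",
        tofile=f"{name} (after)",
    )
    return "".join(lines)


if __name__ == "__main__":
    old = "# Project\n- Use REST for the public API\n"
    new = "# Project\n- Use gRPC for the public API\n"
    # A human can read, question, or reject this change before it is saved.
    print(review_diff(old, new))
```

The same review happens for free if the files live in a Git repository, since every rewrite shows up as a normal commit diff.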

Karpathy has a track record of identifying the simple idea hiding behind the complicated solution. It would be unwise to assume this one is any different.
