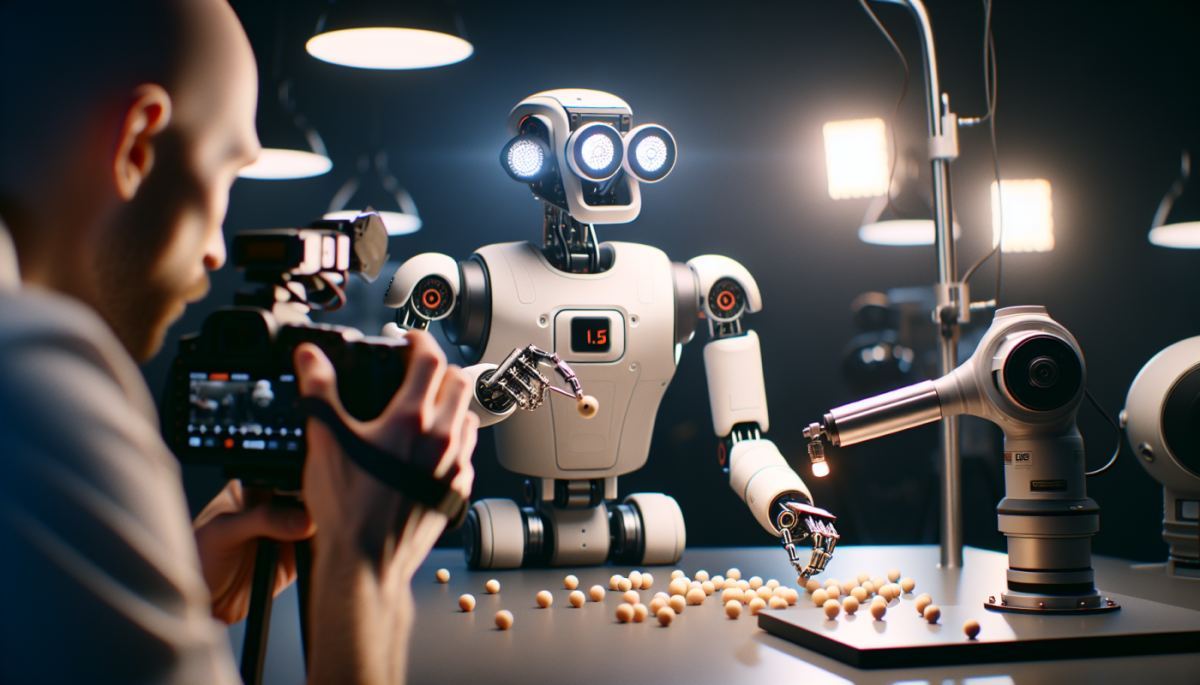

There is a particular kind of intelligence that humans take entirely for granted: the ability to look at a cluttered kitchen counter, decide what needs to happen, pick up the right object, and do something useful with it. For decades, that mundane competence has been the wall that robotics could not scale. Gemini Robotics 1.5 is Google DeepMind's most serious attempt yet to knock it down.

The model represents a meaningful shift in how AI systems interact with the physical world. Rather than simply processing language or generating images, Gemini Robotics 1.5 is designed to power what DeepMind calls "physical agents": robots capable of perceiving their environment, forming a plan, using tools, and executing multi-step tasks in the real world. The language of the announcement is deliberate: perceive, plan, think, use tools, act. That sequence mirrors the cognitive loop that underlies most human physical work, and encoding it into a machine system is considerably harder than it sounds.

What makes this moment distinct from previous robotics milestones is the role of the underlying model. Earlier generations of industrial robots were programmed for specific, repetitive tasks in controlled environments. They were fast and precise but brittle — change the environment slightly and they failed. The promise of foundation-model-driven robotics is generalization: a system that can handle novel situations because it has learned something closer to general reasoning, not just pattern-matching against a fixed script.

The gap between digital AI and physical AI is not merely technical — it is architectural. A language model operates in a forgiving medium. Words do not fall off tables. Sentences do not require grip strength calibration. The physical world introduces what roboticists call the "embodiment problem": the system must continuously reconcile what it perceives with what it intends, and then translate that intention into precise motor commands in real time, under conditions that are never perfectly predictable.

Gemini Robotics 1.5 addresses this by integrating perception, planning, and action within a single model framework rather than stitching together separate systems for vision, reasoning, and motor control. That integration matters enormously. When perception and planning are handled by different systems, latency and translation errors accumulate. A unified model can, in theory, reason about what it sees and what it should do in a tighter, faster loop — closer to how biological intelligence actually works.

The emphasis on "complex, multi-step tasks" in DeepMind's framing is also worth pausing on. Single-step robotic actions — pick up this object, move it here — have been achievable for years. The hard problem is chaining actions together coherently over time, adapting when something goes wrong mid-sequence, and understanding the goal well enough to improvise. That is the territory Gemini Robotics 1.5 is entering.
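To make the multi-step point concrete, here is a minimal toy sketch of a perceive, plan, act loop that replans after every step, so a failed action mid-sequence is simply retried from a fresh observation. It is an illustration under loud assumptions: the names `World`, `perceive`, `plan`, `act`, and `run_task` are hypothetical, the "world" is a dictionary rather than a robot, and nothing here reflects how Gemini Robotics 1.5 is actually implemented.

```python
from dataclasses import dataclass, field
from typing import Optional

# Every name below is a hypothetical illustration, not a Gemini Robotics API.


@dataclass
class World:
    """Toy stand-in for a physical scene."""
    locations: dict                              # object name -> current location
    held: Optional[str] = None                   # object currently in the gripper
    fail_once: set = field(default_factory=set)  # (action, object) pairs that fail one time


def perceive(world):
    """Return a snapshot of the scene; a real system would read sensors."""
    return dict(world.locations), world.held


def plan(observation, goal):
    """Turn the current observation into the remaining pick/place steps."""
    locations, held = observation
    steps = []
    for obj, target in goal.items():
        if locations.get(obj) == target:
            continue                             # already where it should be
        if held != obj:
            steps.append(("pick", obj, "gripper"))
        steps.append(("place", obj, target))
    return steps


def act(world, step):
    """Try one step; return False on failure (e.g. a simulated slipped grasp)."""
    action, obj, target = step
    if (action, obj) in world.fail_once:
        world.fail_once.discard((action, obj))
        return False                             # the scene is left unchanged
    if action == "pick" and world.held is not None:
        return False                             # gripper already occupied
    if action == "place" and world.held != obj:
        return False                             # cannot place what is not held
    world.held = obj if action == "pick" else None
    world.locations[obj] = target
    return True


def run_task(world, goal, max_steps=20):
    """Replan from a fresh observation before every action."""
    log = []
    for _ in range(max_steps):
        steps = plan(perceive(world), goal)
        if not steps:
            return True, log                     # goal reached
        ok = act(world, steps[0])                # execute only the next step
        log.append((steps[0], ok))
    return False, log


if __name__ == "__main__":
    world = World(
        locations={"cup": "counter", "plate": "counter"},
        fail_once={("pick", "cup")},             # the first grasp of the cup slips
    )
    done, log = run_task(world, {"cup": "shelf", "plate": "rack"})
    for step, ok in log:
        print(step, "ok" if ok else "failed, replanning")
    print("done:", done)
```

The design choice worth noticing is that the loop never trusts a stale plan: it executes one step, looks again, and plans again. That is the simplest form of the mid-sequence adaptation described above; a real system would face noisy perception, continuous motor control, and failures far less tidy than a single retried grasp.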

The immediate applications most people imagine are warehouse logistics, manufacturing, and elder care — sectors where labor shortages are acute and the tasks, while physically demanding, follow recognizable patterns. But the more consequential second-order effect may be less visible: the compression of the prototyping cycle for physical products and infrastructure.

If robots can be directed through natural language and general reasoning rather than custom programming, the cost and time required to deploy automation in new contexts collapse. A small manufacturer that previously could not afford bespoke robotic integration might suddenly find general-purpose physical agents within reach. That democratization of physical automation could reshape labor markets unevenly across regions and sectors, hitting mid-sized industrial towns and service economies in ways that urban tech hubs will not immediately feel.

There is also a feedback loop worth tracking between physical AI capability and data generation. Every task a physical agent completes in the real world produces new training signal — richer, messier, and more grounded than synthetic data. As these systems deploy at scale, they will generate the very data needed to make the next generation of models more capable. The improvement curve for physical AI may therefore steepen faster than most forecasts currently assume.

What Google DeepMind is betting on, ultimately, is that the same scaling dynamics that transformed language and image generation will eventually transform robotics. The history of AI suggests that bet is not unreasonable. The history of the physical world suggests the road will be longer and stranger than the press releases imply — but the direction of travel is now unmistakable.
